I have been successful in deploying Airflow (using the bitnami chart, but I also had the same issue with other charts), and by running `kubectl get pods` I get:

| NAME | READY | STATUS | RESTARTS | AGE |
|---|---|---|---|---|
| bitnami-release-airflow-scheduler-774d647447-j6vpd | 1/1 | Running | 0 | 4m6s |
| bitnami-release-airflow-web-5897c99754-hq6nr | 1/1 | Running | 0 | 4m6s |
| bitnami-release-airflow-worker-0 | 0/1 | Running | 0 | 4m6s |
| bitnami-release-postgresql-0 | 1/1 | Running | 0 | 4m6s |
| bitnami-release-redis-master-0 | 1/1 | Running | 0 | 4m6s |

I have port-forwarded from the web server's pod to my machine; however, when I try to run the example DAGs, the tasks seem to be stuck in running. I later found an error on the UI: "The scheduler does not appear to be running. The last heartbeat was received 1 minute ago."

The chart was installed with these flags, among others: `--set auth.fernetKey=$AIRFLOW_FERNETKEY` and `--set postgresql.postgresqlPassword=$POSTGRESQL_PASSWORD`.

#AIRFLOW HELM CHART INSTALL#

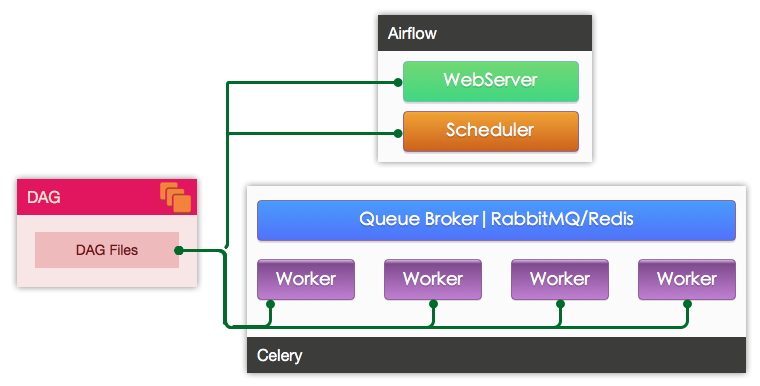

I am still learning about Airflow. My objective is to deploy Airflow on Kubernetes (I am using AWS EKS) with CeleryExecutor and using Helm charts. As a start, I am primarily focused on deploying the example DAGs. I have installed the chart like: `helm install airflow bitnami/airflow \`

We can then proceed to create the user and database for Airflow using psql. The easiest way to do this is to log in to the Azure Portal, open a cloud shell, and connect to the postgres database with your admin user.

If you don’t have one, the quick start guide is your friend. All state for Airflow is stored in the metastore. Choosing this managed database will also take care of backups, which is one less thing to worry about.

Now, the docker image used in the helm chart uses an `entrypoint.sh` which makes some nasty assumptions: it creates the `AIRFLOW__CORE__SQL_ALCHEMY_CONN` value given the postgres host, port, user and password. The issue is that it expects an unencrypted (no SSL) connection by default. Since this blog post uses an external postgres instance, we must use SSL encryption.

The easiest solution to this problem is to modify the Dockerfile and completely remove the ENTRYPOINT and CMD lines. This does involve creating your own image and pushing it to your container registry. The Azure Container Registry (ACR) would serve that purpose very well.
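Once the entrypoint no longer builds the connection string, it has to be supplied some other way. As a rough sketch of what an SSL-enabled value for `AIRFLOW__CORE__SQL_ALCHEMY_CONN` could look like, the snippet below assembles a SQLAlchemy-style Postgres URL with `sslmode=require`. The function name, host, user, password and database are made-up placeholders, and the `postgresql+psycopg2` driver prefix is an assumption about the installed driver.

```python
from urllib.parse import quote


def build_sql_alchemy_conn(host, port, user, password, database, sslmode="require"):
    """Build a Postgres URL that asks the client library for an SSL connection.

    User and password are percent-encoded so characters such as '@' or '/'
    cannot be mistaken for URL delimiters.
    """
    return (
        f"postgresql+psycopg2://{quote(user, safe='')}:{quote(password, safe='')}"
        f"@{host}:{port}/{database}?sslmode={sslmode}"
    )


# Placeholder values for illustration only.
conn = build_sql_alchemy_conn(
    host="example.postgres.database.azure.com",
    port=5432,
    user="airflow@example",
    password="p@ss/word",
    database="airflow",
)
print(conn)
```

The resulting string could then be exported as the `AIRFLOW__CORE__SQL_ALCHEMY_CONN` environment variable of the Airflow containers, ideally via a Kubernetes secret and not in plain text.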

#AIRFLOW HELM CHART FULL#

To make full use of cloud features, we’ll connect to a managed Azure Postgres instance.

The fernet key in Airflow is designed to communicate secret values from database to executor. If the executor does not have access to the fernet key, it cannot decode connections. To make sure this is possible, set the following value in airflow-local.yaml:

Note that the latter one only works if you’ve invoked the former command at least once.
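For readers who still need a key to put in that value: a Fernet key is 32 random bytes encoded as URL-safe base64. The standard-library sketch below generates one and checks that a given string has the right shape. The helper names are made up for illustration, and the `cryptography` package's `Fernet.generate_key()` is the more usual way to produce a key.

```python
import base64
import os


def generate_fernet_key() -> str:
    """Return 32 random bytes as URL-safe base64, the format a Fernet key uses."""
    return base64.urlsafe_b64encode(os.urandom(32)).decode("ascii")


def looks_like_fernet_key(value: str) -> bool:
    """Check only the shape of the key: valid URL-safe base64 decoding to 32 bytes."""
    try:
        raw = base64.urlsafe_b64decode(value.encode("ascii"))
    except (ValueError, UnicodeEncodeError):
        return False
    return len(raw) == 32


key = generate_fernet_key()
print(len(key), looks_like_fernet_key(key))
```

The same key has to reach every component that reads connections from the metastore, otherwise values encrypted by one component cannot be decrypted by another.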

Kubernetes Executor on Azure Kubernetes Service (AKS)

The Kubernetes executor for Airflow runs every single task in a separate pod. It does so by starting a new run of the task using the `airflow run` command in a new pod. The executor also makes sure the new pod will receive a connection to the database and the location of DAGs and logs.

AKS is a managed Kubernetes service running on the Microsoft Azure cloud. It is assumed the reader has already deployed such a cluster; this is in fact quite easy using the quick start guide. Note: this guide was written using AKS version 1.12.7.

We’ll be modifying this file throughout this guide.
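To make the "one pod per task" idea more concrete, here is a simplified sketch of the kind of pod description such an executor might produce for a single task. It is not the executor's actual output: the function name, image name and connection string are hypothetical, and a real pod would also carry a unique name and the volumes or settings that point at the DAGs and logs.

```python
def build_task_pod(dag_id, task_id, execution_date, image, sql_alchemy_conn):
    """Describe a single-use pod that runs exactly one task instance."""
    pod_name = f"{dag_id}-{task_id}".lower().replace("_", "-")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            # The pod exists for one task run and should not be restarted.
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": "base",
                    "image": image,
                    # The task is started through the `airflow run` command.
                    "command": ["airflow", "run", dag_id, task_id, execution_date],
                    # The pod needs the metastore connection to report its state.
                    "env": [
                        {
                            "name": "AIRFLOW__CORE__SQL_ALCHEMY_CONN",
                            "value": sql_alchemy_conn,
                        }
                    ],
                }
            ],
        },
    }


# Hypothetical values for illustration only.
pod = build_task_pod(
    dag_id="example_dag",
    task_id="print_date",
    execution_date="2019-05-01T00:00:00",
    image="example.azurecr.io/airflow:latest",
    sql_alchemy_conn="postgresql+psycopg2://user:pass@host:5432/airflow?sslmode=require",
)
print(pod["spec"]["containers"][0]["command"])
```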